Towards understanding the logical reasoning of AI models

Making AI explain itself the same way people explain themselves

Almost every day, we interact with artificial intelligence (AI) without even thinking about it: when we get shopping suggestions online, when our phones suggest the next word we want to type, or when we chat with customer service bots. These systems are powered by AI models containing millions of parameters and mathematical functions. While they can produce impressive results, they often work like a “black box,” leaving users unsure how they arrived at a specific answer.

Researchers at BIFOLD have been exploring how to make AI explain itself the same way people explain themselves. The team’s work focuses on making AI predictions as clear and intuitive as a human explanation. They recently published their results in the journal Information Fusion.

“If I ask, ‘Why are you taking an umbrella?’ a natural answer would be, ‘Because it’s raining and I want to go outside.’ Humans explain their decisions logically, and we wanted AI to do the same,” says Thomas Schnake, co-author of the study.

The idea of making AI models human-readable is not new. One established approach, known as symbolic AI, represents knowledge explicitly through symbols, rules, and logic, often in the form of simple “IF… THEN” statements. For example, a symbolic system might encode the rule: IF it is raining AND I want to go outside, THEN take an umbrella. If you ask this model what to do when it rains and you want to go outside, it suggests taking an umbrella.
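The umbrella rule can be written down as a few lines of code. The following is only a minimal sketch of the idea; the function name `recommend` and the fallback answer are illustrative assumptions, not part of any real symbolic AI system.

```python
def recommend(raining: bool, going_outside: bool) -> str:
    """Toy symbolic rule: IF raining AND going outside THEN take an umbrella."""
    if raining and going_outside:
        return "take an umbrella"
    # Fallback answer is an assumption made for this illustration.
    return "no umbrella needed"


print(recommend(raining=True, going_outside=True))
```

Because the whole decision logic is one readable condition, anyone can check why the system gave a particular answer.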

In contrast to today’s widely used statistical AI models, symbolic AI relies on interpretable, rule-based reasoning rather than many opaque mathematical operations and representations. When such rules are short, the model’s reasoning can be understood simply by reading them. However, symbolic systems typically have limited learning capacity and scale poorly. As a result, they are mainly applied in domains where reproducibility, transparency, and controllability are more important than predictive performance, such as medical, legal, or eligibility decision systems.

Symbolic AI systems imitate a neural network’s behavior

The field of explainable AI (XAI) tries to make modern AI models more transparent. One popular method is to highlight which parts of the input matter most for a prediction - like using a heat map to show the most important words in a document or the most important areas in an image. These heat maps are often enough to give users a rough idea of what the AI is focusing on. But if we want a detailed, logical breakdown of the model’s decision-making process, heat maps alone fall short.

Another way to explain a model’s prediction is to build a symbolic AI system that imitates the neural network’s behavior using “IF… THEN” rules. These rules are easy to understand, but they only mimic the network’s decisions and don’t reveal how the original model actually works. That means they can’t provide deep insight into the AI’s internal logic.

The BIFOLD team extended an existing technique called Layer-wise Relevance Propagation (LRP), which calculates how important each connection in the network is for a prediction. They then used those importance scores to discover logical relationships between parts of the network - a method they call Symbolic XAI. This allowed them to extract rules that closely match how the AI actually makes decisions, rather than just imitating its behavior.
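To give a rough feel for what relevance propagation does, the sketch below redistributes relevance backwards through a single linear layer using the commonly described LRP epsilon rule. It is an illustration under stated assumptions, not the BIFOLD team’s extended method: the function name `lrp_linear`, the tiny weights, and the omission of bias terms are all choices made for this example.

```python
def lrp_linear(activations, weights, relevance_out, eps=1e-9):
    """Redistribute output relevance to the inputs of one linear layer.

    weights[i][j] connects input i to output j. Bias terms are omitted.
    """
    n_in, n_out = len(activations), len(relevance_out)
    relevance_in = [0.0] * n_in
    for j in range(n_out):
        # Pre-activation of output neuron j.
        z_j = sum(activations[i] * weights[i][j] for i in range(n_in))
        # Small stabilizer avoids division by zero and keeps the sign of z_j.
        z_j += eps if z_j >= 0 else -eps
        for i in range(n_in):
            # Input i receives a share proportional to its contribution a_i * w_ij.
            relevance_in[i] += activations[i] * weights[i][j] / z_j * relevance_out[j]
    return relevance_in


# Hypothetical toy layer with two inputs and two outputs.
a = [1.0, 2.0]
w = [[0.5, -1.0],
     [0.25, 1.0]]
r_out = [1.0, 1.0]
r_in = lrp_linear(a, w, r_out)
print(r_in, sum(r_in))
```

In this toy setting the input relevances add up to (almost exactly) the output relevances, which is the conservation property that makes such scores usable as building blocks for further analysis.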

With Symbolic XAI, the model’s strategy can be expressed as logical formulas that directly reflect its internal workings.

“This method lets us combine the learning power of neural networks with the clarity of symbolic reasoning. We can now see which logical formula best summarizes the model’s predictions,” says Thomas Schnake.

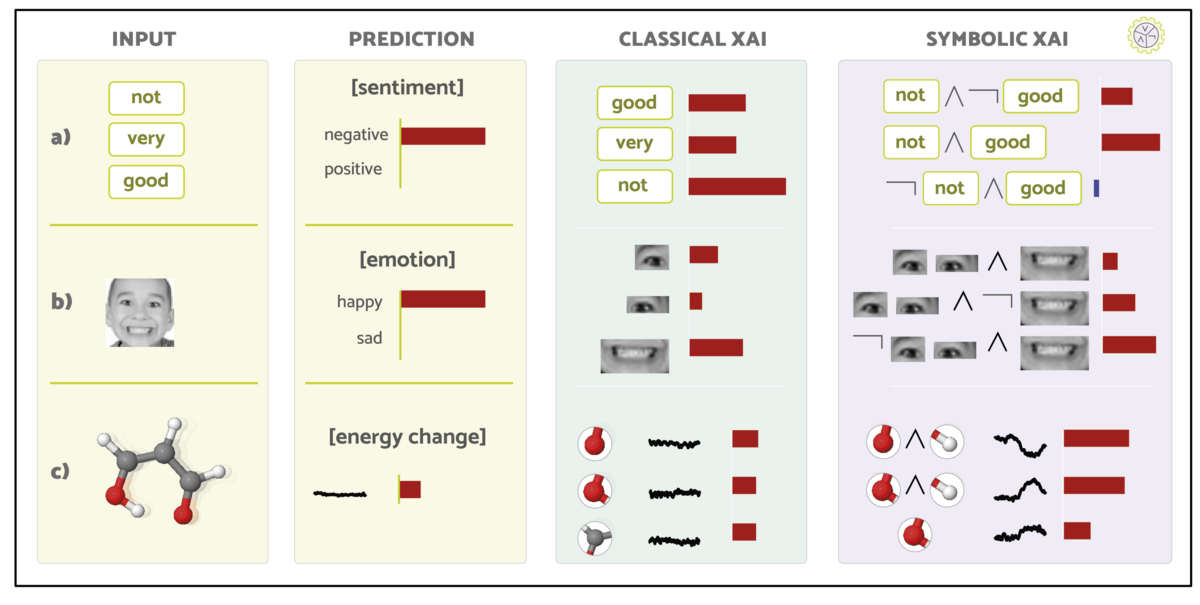

Classic XAI measures how strongly the presence of individual input features contributed to the prediction. With the new method (Symbolic XAI), it is additionally possible to measure how strongly the relationships between input features contributed to the prediction and how the absence of input features would have influenced the prediction. In the figure, the red and blue bars show the size of each contribution and whether it was positive (red) or negative (blue) with respect to the prediction; negative means that it argues against the prediction. Symbolic XAI describes the relationships between input features using well-known logical symbols.

The symbol ∧ describes a conjunctive relationship. If the formula “not ∧ good” contributed positively to the prediction, this means that the words “not” and “good” must occur together to produce this contribution, and that separately they can have a completely different influence. This makes sense for the prediction that “not very good” has a negative character, since the combination of a negation with a positive word plays the essential role here.

The symbol ¬ is a logical negation and is read in the formula as the absence of input features. The colored bar next to the formula “¬ not ∧ good” describes what contribution the word “good” would have had in the absence of “not”. This contribution is negative, which makes sense, since the word “good” without “not” would argue against the prediction that a negative sentiment was expressed in the sentence.
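The two formulas can be made concrete with a toy calculation. The sketch below is a simplified illustration and not necessarily the definition used in the paper: it assumes a hypothetical table `toy_score` holding a model’s score for the class “negative sentiment” when only the listed words are kept, and the numbers are invented for the example.

```python
# Hypothetical scores for the class "negative sentiment" when only the
# listed words are kept in the input. The values are invented.
toy_score = {
    frozenset(): 0.0,
    frozenset({"not"}): 0.1,
    frozenset({"good"}): -0.6,
    frozenset({"not", "good"}): 0.8,
}


def conjunction(a, b, score):
    """Joint effect of a AND b beyond what each word contributes alone."""
    return (score[frozenset({a, b})] - score[frozenset({a})]
            - score[frozenset({b})] + score[frozenset()])


def contribution_alone(word, score):
    """Effect of `word` when the other word is absent from the input."""
    return score[frozenset({word})] - score[frozenset()]


not_and_good = conjunction("not", "good", toy_score)       # "not ∧ good"
good_without_not = contribution_alone("good", toy_score)   # "¬ not ∧ good"
print(not_and_good > 0, good_without_not < 0)
```

With these invented numbers, the conjunction supports the negative-sentiment prediction while “good” without “not” argues against it, mirroring the red and blue bars described above.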

The researchers tested their method on text, images, and molecular data. They found that AI systems often learn reliable rules on their own, even without being trained to follow them. This approach could be especially useful in fields where it is essential that the AI’s reasoning is explainable, such as medicine or security, to ensure the rules it follows are correct.

Publication:

Thomas Schnake, Farnoush Rezaei Jafari, Jonas Lederer, Ping Xiong, Shinichi Nakajima, Stefan Gugler, Grégoire Montavon, Klaus-Robert Müller: “Towards symbolic XAI — explanation through human understandable logical relationships between features"