Prof. Dr. Konrad Rieck

Research Group Lead

Affiliation: BIFOLD

Konrad Rieck is a Professor of Computer Science at TU Berlin and BIFOLD, where he heads the Chair of Machine Learning and Security. Previously, he held positions at TU Braunschweig, the University of Göttingen, and the Fraunhofer Institute FIRST. In 2024, he served as a Guest Professor at TU Wien.

His research focuses on the intersection of computer security and machine learning. His group develops novel methods for detecting computer attacks, analyzing malicious software, and discovering security vulnerabilities. In addition, they investigate the security and privacy of learning algorithms. He is also interested in efficient algorithms for analyzing structured data, including strings, trees, and graphs.

- ACSAC Test-of-Time Award, 2025

- CCS Distinguished Paper Award, 2025

- USENIX Security Distinguished Paper Award, 2024

- IEEE S&P Test-of-Time Award, 2024

- USENIX Security Distinguished Paper Award, 2022

- ERC Consolidator Grant, 2021

- AISEC Best Paper Award, 2021

- Winner, Microsoft MLSEC Competition, 2020

- LehrLeo: Best Lecture at TU Braunschweig, 2019

- LehrLeo: Best Lab Course at TU Braunschweig, 2019

- German Prize for IT-Security (2nd Place), 2016

- DIMVA Best Paper Award, 2016

- Google Faculty Research Award, 2014

- ACSAC Outstanding Paper Award, 2012

- CAST/GI Dissertation Award IT-Security, 2010

Adversarial Machine Learning

Konrad Rieck’s group investigates the security and privacy of machine learning systems with the goal of building models that remain reliable under adversarial manipulation, including adversarial examples, data poisoning, and backdoor attacks. They study how such threats emerge in real-world deployments and develop principled methods to mitigate their impact. A defining feature of their work is the integrated attacker–defender perspective: by systematically analyzing attack strategies, they uncover structural weaknesses in models, training procedures, and computational backends. These insights inform the design of robust training approaches, detection mechanisms, and evaluation frameworks grounded in realistic threat models.

Intelligent Security Systems

The group develops intelligent security systems for automatically detecting and analyzing threats, spanning attack detection, malware analysis, and vulnerability discovery. Their research emphasizes learning-based methods that adapt to evolving environments rather than relying on static signatures or predefined rules. The objective is to design scalable and adaptive protection mechanisms suitable for complex settings such as enterprise networks, industrial control systems, and large software ecosystems. In parallel, the group examines fundamental challenges including generalization, robustness, and the methodological limits of machine learning in security-critical applications.

Novel Attacks and Defenses

The group approaches offensive and defensive security research as complementary drivers of progress. By identifying previously unknown vulnerabilities, they reveal weaknesses that might otherwise remain unnoticed, and they develop concrete defenses to address emerging threats. Their work combines large-scale empirical studies with technically sophisticated attack constructions, analyzing how modern technologies, such as large language models and multimedia processing pipelines, can be exploited in practice. This research is grounded in realistic attacker models and rigorous evaluation, contributing to a deeper understanding of evolving attack surfaces and effective countermeasures.

- European Laboratory for Learning and Intelligent Systems (ELLIS)

- BMBF Plattform Lernende Systeme: WG 3 IT Security, Privacy, Legal and Ethical Framework

- Editorial Board, ACM Transactions on AI Security and Privacy (TAISAP), 2025–2028

- Editorial Board, Journal of Machine Learning Research (JMLR), 2013–2019

- Guest Editor, Special Issue “Autonomous AI Agents in Computer Security,” IEEE S&P, 2025

- Guest Editor, Special Issue “Adversarial Machine Learning,” OJSP, 2023

- Guest Editor, Special Issue “Vulnerability Analysis,” Information Technology, 2016

- Guest Editor, Special Issue “Threat Detection, Analysis and Defense,” JISA, 2013

- Jury Member, German IT Security Award, 2022, 2024, 2026

- Jury Member, CAST/GI Dissertation Award IT-Security, 2016–2025

- Jury Member, Dutch Cyber Security Research Paper Award, 2018

- Steering Committee, IEEE Workshop on Deep Learning and Security (DLS)

- Steering Committee, ACM Workshop on Robust Malware Analysis (WORMA)

- Steering Committee, SIG Intrusion Detection and Response (SIDAR)

Thorsten Eisenhofer, Erwin Quiring, Jonas Möller, Doreen Riepel, Thorsten Holz, Konrad Rieck

No more Reviewer #2: Subverting Automatic Paper-Reviewer Assignment using Adversarial Learning

Alexander Warnecke, Lukas Pirch, Christian Wressnegger, Konrad Rieck

Machine Unlearning of Features and Labels

Daniel Arp, Erwin Quiring, Feargus Pendlebury, Alexander Warnecke, Fabio Pierazzi, Christian Wressnegger, Lorenzo Cavallaro, Konrad Rieck

Dos and Don’ts of Machine Learning in Computer Security

Alexander Warnecke, Daniel Arp, Christian Wressnegger, Konrad Rieck

Evaluating Explanation Methods for Deep Learning in Security

Erwin Quiring, David Klein, Daniel Arp, Martin Johns, Konrad Rieck

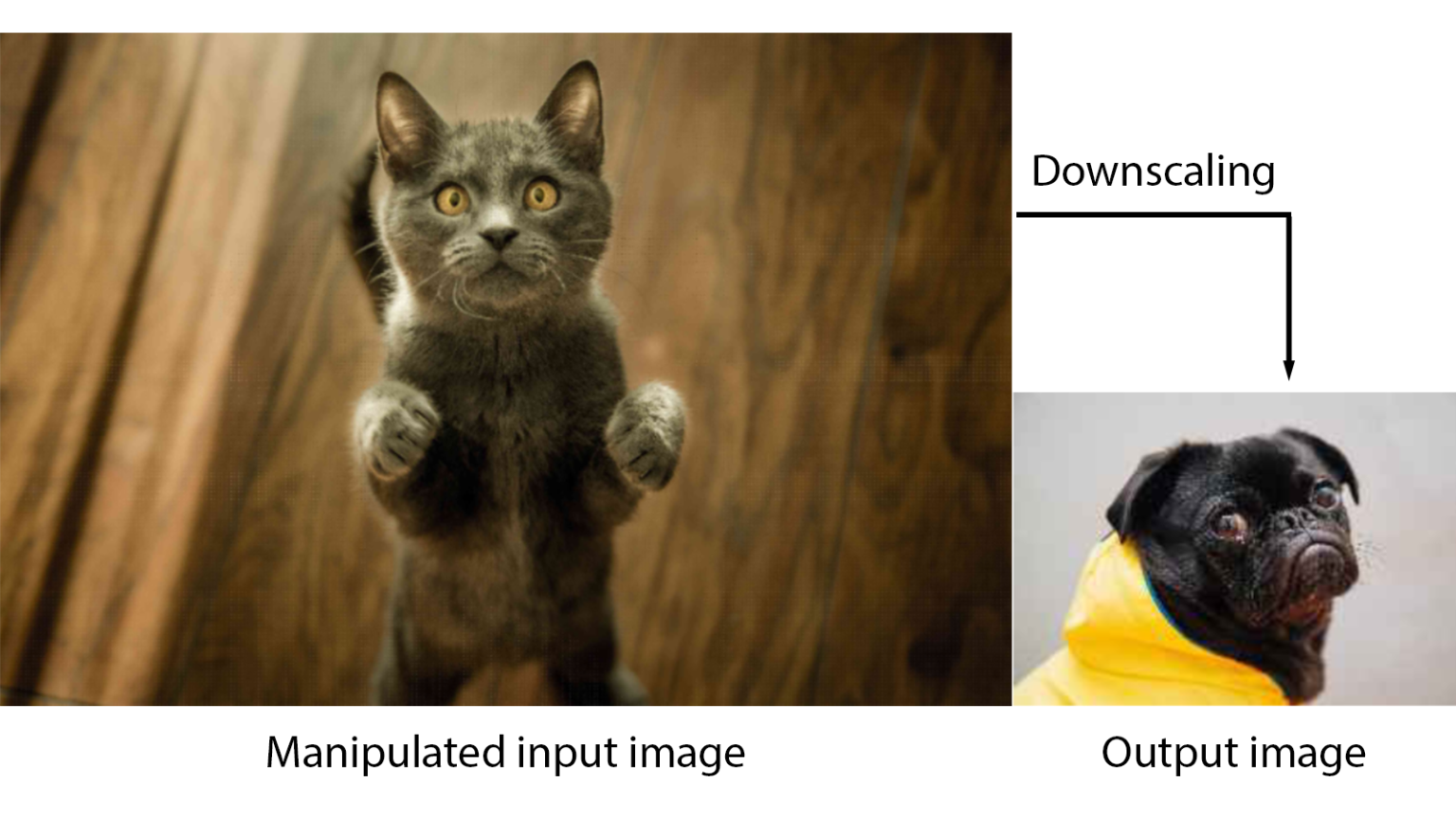

Adversarial Preprocessing: Understanding and Preventing Image-Scaling Attacks in Machine Learning

BIFOLD appoints new Deputy Directors

BIFOLD appoints Prof. Dr. Begüm Demir, Prof. Dr. Konrad Rieck, and Prof. Dr. Alexander Meyer as Deputy Directors. With this decision, BIFOLD strengthens its leadership team and underlines its commitment to advancing cutting-edge research in data-driven technologies and machine learning.

SaTML 2026 Conference Contributions

BIFOLD supports this year's IEEE SaTML, which is held from March 23 to 25 at the Technical University of Munich.

Test-of-Time Award for Konrad Rieck

Congratulations to BIFOLD Research Group Lead Konrad Rieck and his former colleagues. The Annual Computer Security Applications Conference (ACSAC) awarded the scientists the Test-of-Time Award for their publication "CUJO: Efficient Detection and Prevention of Drive-by-Download Attacks" (2010).

ACM CCS 2025: Distinguished Paper Award

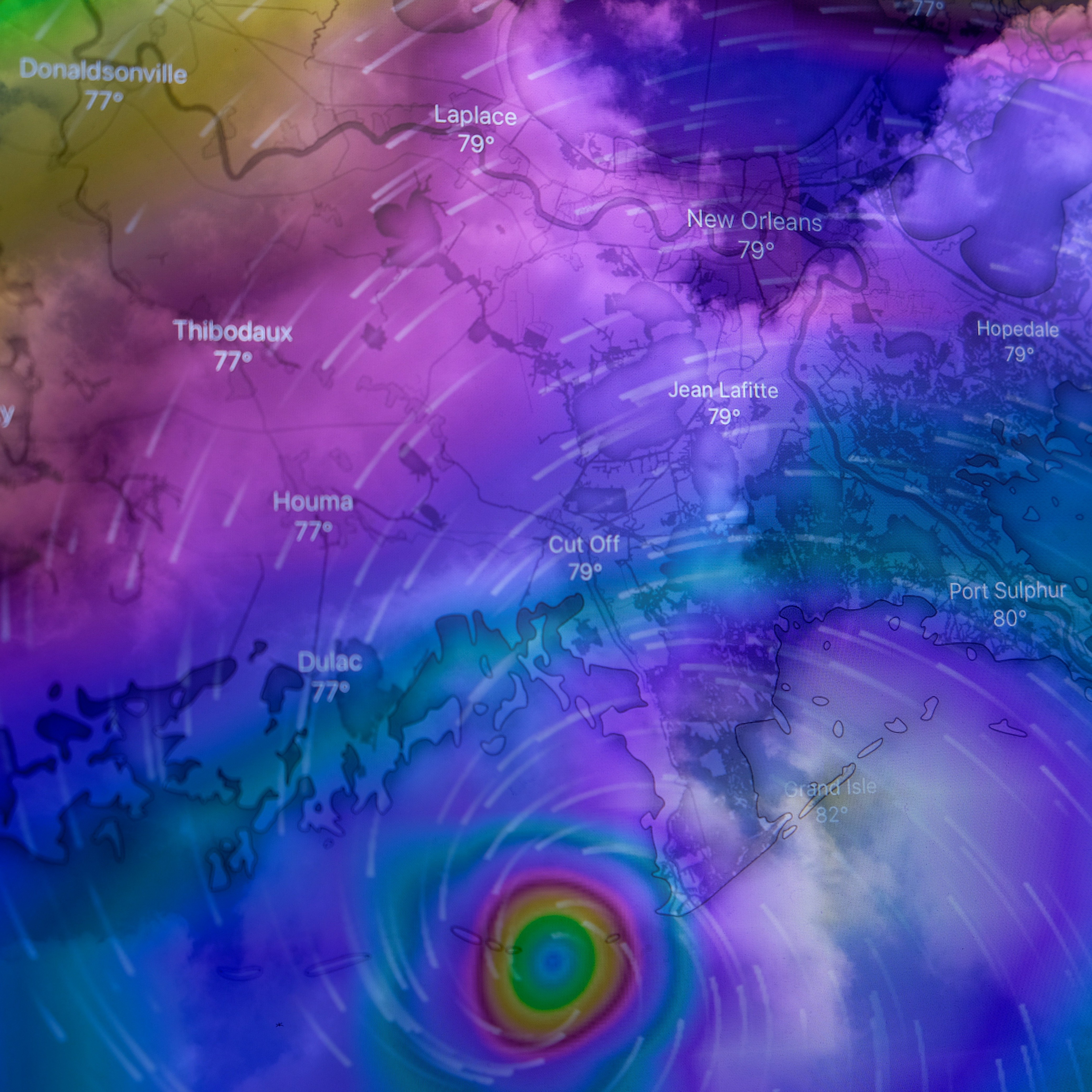

Congratulations to BIFOLD researchers Erik Imgrund, Thorsten Eisenhofer and Konrad Rieck from the ML Sec group, whose paper “Exposing Security Risks in AI Weather Forecasting” received a Distinguished Paper Award at the ACM Conference on Computer and Communications Security (CCS) 2025.

Attacking privacy leaks in virtual backgrounds

Peeking through the virtual curtain: A new study by the BIFOLD MLSEC group reveals that current virtual backgrounds in video calls can leak enough pixels from the environment to reconstruct objects in the background.

USENIX 2025 Conference Contributions

BIFOLD researchers from the MLSec group will present two papers at the 34th USENIX Security Symposium (Aug 13–15, 2025, Seattle). One paper shows that virtual backgrounds in video calls can unintentionally reveal parts of a user’s real surroundings, exposing them to privacy risks.

Even the smallest number can make a big difference

Minor deviations in backend libraries like CUDA or MKL can cause identical AI models to produce different outputs. At ICML 2025, BIFOLD researcher Konrad Rieck showed how such subtle imprecisions can be exploited, posing a significant risk to AI system security.

IEEE SaTML 2025 Conference Contribution

Dr. Thorsten Eisenhofer will present the paper “Verifiable and Provably Secure Machine Unlearning” at SaTML 2025. Eisenhofer is a postdoc in the research group “Machine Learning and Security”. His paper introduces a new framework designed to verify that user data has been correctly deleted from machine learning models, supported by cryptographic proofs.

Machine Learning Backdoors in Hardware

So-called backdoor attacks pose a serious threat to machine learning, as they can compromise the integrity of security-critical AI systems, such as those used in autonomous driving or healthcare.

Learning from the Best

Dr. Anne Josiane Kouam is researching mobile security and privacy at BIFOLD. She's been selected as one of 200 promising young mathematicians and computer scientists to spend a week with leading experts in the field.

BIFOLD researchers present four papers at ASIACCS 2024

The 19th ACM ASIA Conference on Computer and Communications Security (ASIACCS 2024) will take place in Singapore from July 1 to July 5, 2024. The conference will focus on specific areas of computer science, such as information security and information privacy.

IEEE Test-of-Time Award for Konrad Rieck

Together with his former PhD student Fabian Yamaguchi and the entire team, Konrad Rieck won the Test-of-Time Award at the IEEE Symposium on Security and Privacy for their paper "Modeling and Discovering Vulnerabilities with Code Property Graphs". The IEEE Symposium on Security and Privacy is the oldest and most important conference in computer security. Each year, the "Test-of-Time Award" is given to papers that significantly influenced research and can be described as groundbreaking.

Researcher Spotlight: Dr. Alexander Warnecke

Congratulations to Dr. Alexander Warnecke, who successfully defended his PhD thesis "Security Viewpoints on Explainable Machine Learning" on April 16, 2024.

Project Launch AIgenCY

With the innovative research project "AIgenCY - Opportunities and Risks of Generative AI in Cybersecurity," leading experts from academia and industry take on the challenge of exploring the implications of generative artificial intelligence (AI) for cybersecurity.

Email security under scrutiny: Examining SPF weaknesses

Cybersecurity researchers at BIFOLD found weaknesses in Sender Policy Framework (SPF) records, which protect email users from forged senders. The paper “Lazy Gatekeepers: A Large-Scale Study on SPF Configuration in the Wild” includes an analysis of 12 million domains’ SPF records and was presented at the 2023 ACM Internet Measurement Conference.

Adversarial Papers: New attack fools AI-supported text analysis

Security researchers have found significant vulnerabilities in learning algorithms used for text analysis: With the help of a novel attack, they were able to show that topic recognition algorithms can be fooled by even small changes in words, sentences and references.

BIFOLD welcomes Israel delegation

Among other institutions, BIFOLD hosted a delegation from Israeli universities as part of Germany's "Willkommen" Visitors Programme. Various BIFOLD researchers gave a short introduction to their research foci in AI. The “Willkommen” programme invites opinion leaders to experience Germany and gain a nuanced understanding of the country.

Machine Learning and Security

Welcome to Prof. Dr. Konrad Rieck, who started on January 1, 2023, and heads the new research group Machine Learning and Security at BIFOLD and TU Berlin.

Cybersecurity under scrutiny

In cybersecurity research, machine learning (ML) has emerged as one of the most important tools for investigating security-related problems. However, a group of European researchers from TU Berlin, TU Braunschweig, University College London, King’s College London, Royal Holloway University of London, and Karlsruhe Institute of Technology (KIT)/KASTEL Security Research Labs, led by BIFOLD researchers from TU Berlin, has recently shown that research with ML in cybersecurity contexts is often prone to error.

Preventing Image-Scaling attacks on Machine Learning

BIFOLD Fellow Prof. Dr. Konrad Rieck, head of the Institute of System Security at TU Braunschweig, and his colleagues provide the first comprehensive analysis of image-scaling attacks on machine learning, including a root-cause analysis and effective defenses. Konrad Rieck and his team showed that attacks on scaling algorithms, such as those used in preprocessing for machine learning (ML), can manipulate images unnoticeably, change their content after downscaling, and create unexpected and arbitrary image outputs. The work was presented at the USENIX Security Symposium 2020.